GIST - Multimodal Knowledge Extraction and Spatial Grounding

2026 - In Submission

Team members:

Shivendra Agrawal, Bradley Hayes

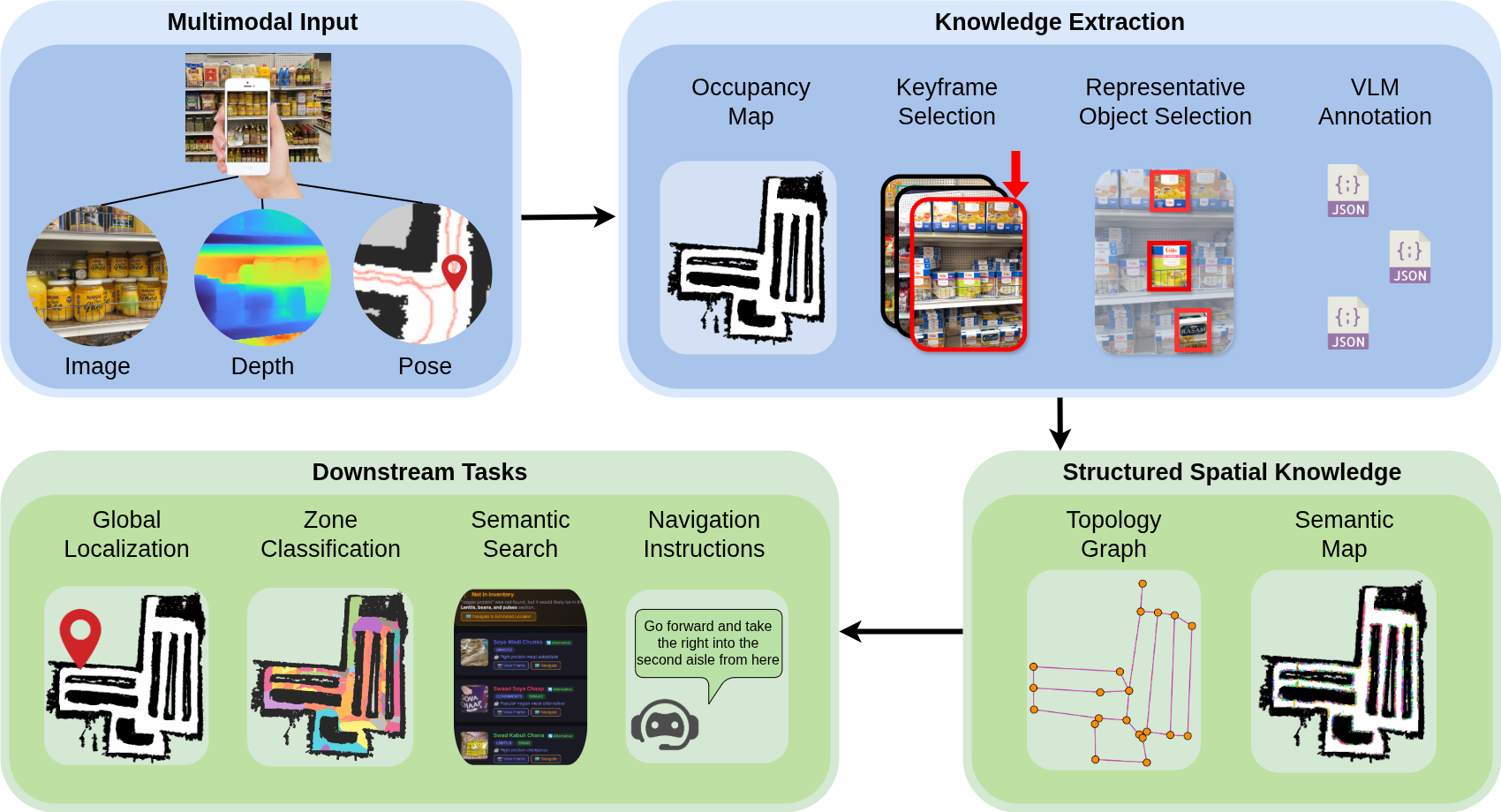

The GIST Multimodal Knowledge Extraction Architecture transforms raw multimodal inputs into Structured Spatial Knowledge.

Abstract

Navigating and searching complex, densely packed environments such as retail stores, warehouses, libraries, and hospitals poses a significant spatial grounding challenge for both humans and embodied AI. We present GIST (Grounded Intelligent Semantic Topology), a multimodal knowledge extraction pipeline that transforms a consumer-grade mobile point cloud into a semantically annotated navigation topology.

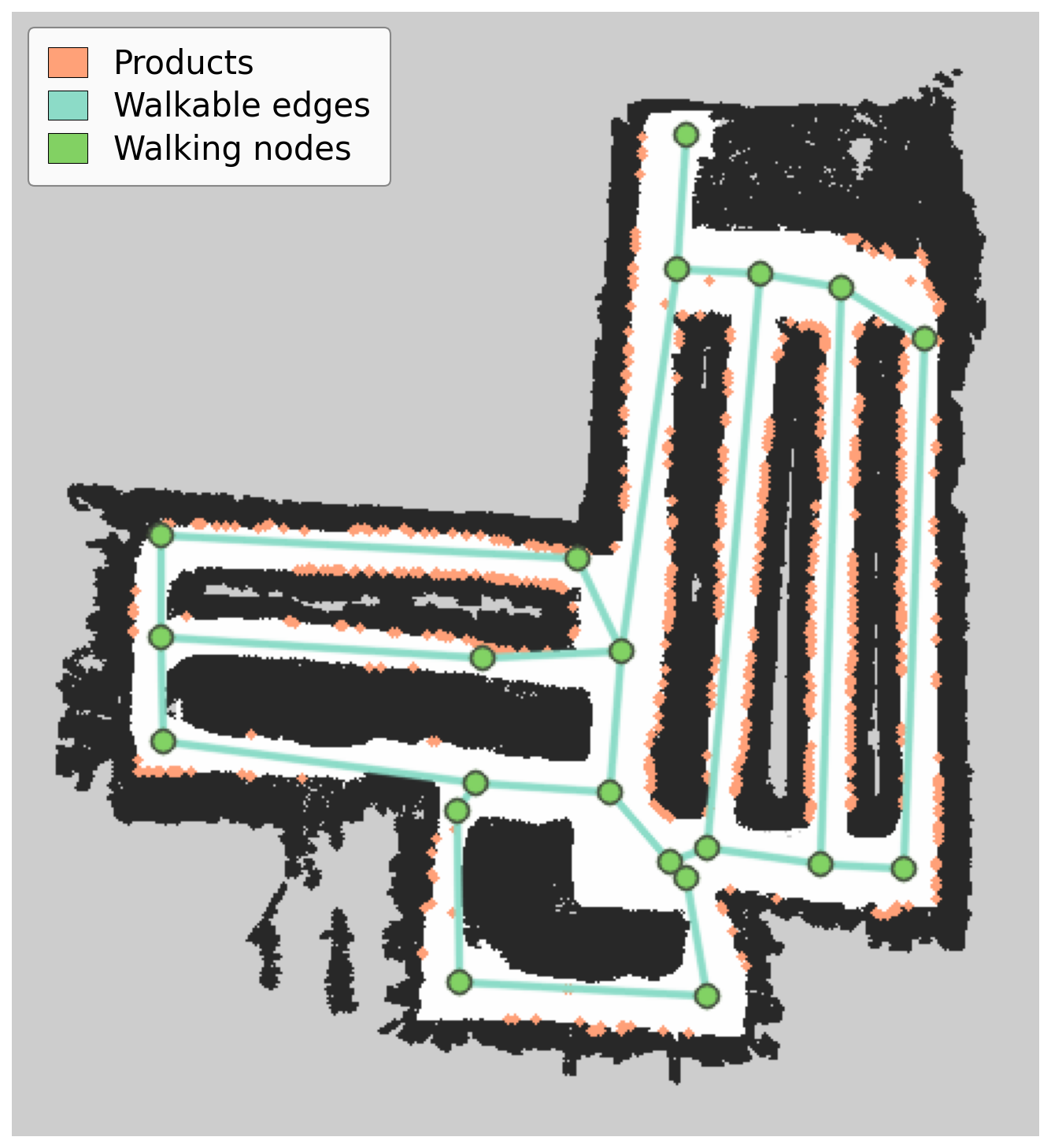

GIST Semantic Topology: The skeletonization-derived graph providing walking nodes and traversable edges, overlaid with localized products.
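To make the idea of walking nodes and traversable edges concrete, the following is a minimal sketch of how such a graph could be queried. It is not the GIST implementation: the node names, coordinates, and the choice of Dijkstra's algorithm with Euclidean edge lengths are all assumptions made for illustration.

```python
import heapq
import math


def nearest_node(nodes, position):
    """Attach a localized product to the closest walking node."""
    return min(nodes, key=lambda n: math.dist(nodes[n], position))


def shortest_path(nodes, edges, start, goal):
    """Dijkstra over an undirected graph with Euclidean edge lengths.

    nodes: {node_id: (x, y)} walking-node positions in metres (hypothetical).
    edges: iterable of (node_id, node_id) traversable connections.
    Returns (list of node ids, total length), or (None, inf) if unreachable.
    """
    adjacency = {n: [] for n in nodes}
    for a, b in edges:
        length = math.dist(nodes[a], nodes[b])
        adjacency[a].append((b, length))
        adjacency[b].append((a, length))

    best = {start: 0.0}
    previous = {}
    queue = [(0.0, start)]
    while queue:
        cost, node = heapq.heappop(queue)
        if node == goal:
            break
        if cost > best.get(node, math.inf):
            continue  # stale queue entry, a shorter route was already found
        for neighbour, length in adjacency[node]:
            new_cost = cost + length
            if new_cost < best.get(neighbour, math.inf):
                best[neighbour] = new_cost
                previous[neighbour] = node
                heapq.heappush(queue, (new_cost, neighbour))

    if goal not in best:
        return None, math.inf
    path = [goal]
    while path[-1] != start:
        path.append(previous[path[-1]])
    return path[::-1], best[goal]


# Hypothetical four-node store layout.
nodes = {"entrance": (0, 0), "aisle_1": (0, 5), "aisle_2": (4, 5), "checkout": (4, 0)}
edges = [("entrance", "aisle_1"), ("aisle_1", "aisle_2"), ("aisle_2", "checkout")]

target = nearest_node(nodes, (4.2, 4.0))  # product position -> "aisle_2"
path, length = shortest_path(nodes, edges, "entrance", target)
print(path, length)  # ['entrance', 'aisle_1', 'aisle_2'] 9.0
```

Snapping each product to its nearest walking node keeps routing on the traversable graph rather than through shelving.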

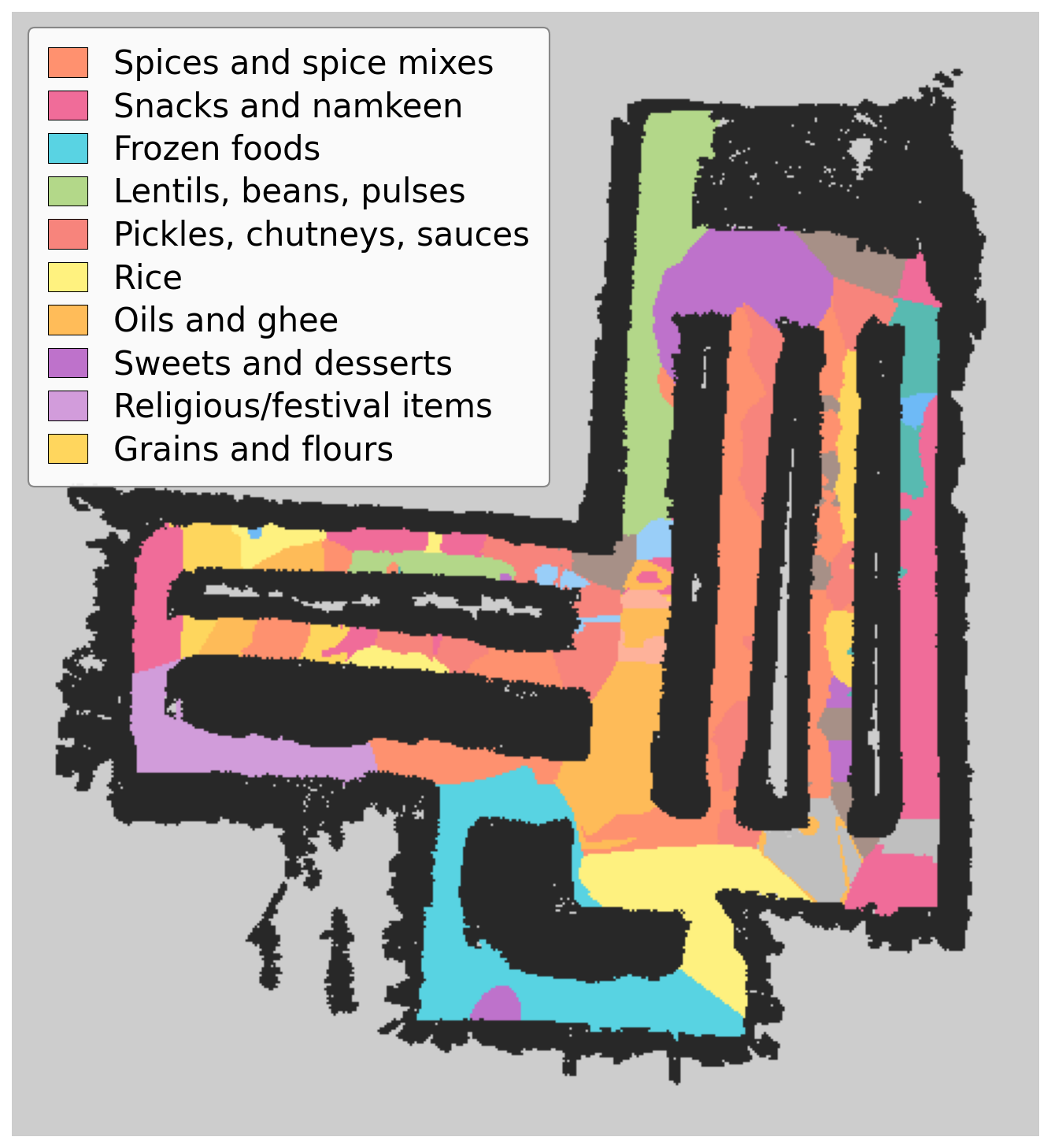

1. Semantic Zone Classification

Free-space pixels are assigned to semantic zones via KD-tree voting over localized product positions. This continuous overlay reveals latent spatial patterns autonomously.
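The voting step described above can be sketched as a k-nearest-neighbour majority vote over a 2D KD-tree. This is an illustrative sketch only: the zone labels, the coordinates, the value `k=3`, and the plain majority rule are assumptions, and the page does not specify how GIST weights or thresholds the votes.

```python
import heapq
from collections import Counter


def build_kdtree(points, depth=0):
    """Build a 2D KD-tree from (x, y, zone_label) tuples.

    Each node is (point, left_subtree, right_subtree); empty subtrees are None.
    """
    if not points:
        return None
    axis = depth % 2
    points = sorted(points, key=lambda p: p[axis])
    mid = len(points) // 2
    return (
        points[mid],
        build_kdtree(points[:mid], depth + 1),
        build_kdtree(points[mid + 1:], depth + 1),
    )


def knn(node, query, k, depth=0, heap=None):
    """Collect the k nearest points as a heap of (-squared_distance, label)."""
    if heap is None:
        heap = []
    if node is None:
        return heap
    point, left, right = node
    d2 = (point[0] - query[0]) ** 2 + (point[1] - query[1]) ** 2
    if len(heap) < k:
        heapq.heappush(heap, (-d2, point[2]))
    elif d2 < -heap[0][0]:
        heapq.heapreplace(heap, (-d2, point[2]))  # evict the farthest neighbour

    axis = depth % 2
    diff = query[axis] - point[axis]
    near, far = (left, right) if diff < 0 else (right, left)
    knn(near, query, k, depth + 1, heap)
    # Search the far side only if the splitting plane is closer than the
    # current k-th neighbour, or if fewer than k neighbours were found so far.
    if len(heap) < k or diff * diff < -heap[0][0]:
        knn(far, query, k, depth + 1, heap)
    return heap


def classify_pixel(tree, pixel, k=3):
    """Assign a free-space pixel to the zone most common among its k neighbours."""
    votes = Counter(label for _, label in knn(tree, pixel, k))
    return votes.most_common(1)[0][0]


# Hypothetical localized products: (x, y, zone).
products = [
    (1, 1, "produce"), (1, 2, "produce"), (2, 1, "produce"),
    (8, 8, "dairy"), (8, 9, "dairy"), (9, 8, "dairy"),
]
tree = build_kdtree(products)
print(classify_pixel(tree, (1.5, 1.5)))  # produce
print(classify_pixel(tree, (8.5, 8.5)))  # dairy
```

Running `classify_pixel` over every free-space pixel of the floor plan would yield the kind of continuous zone overlay the figure shows.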

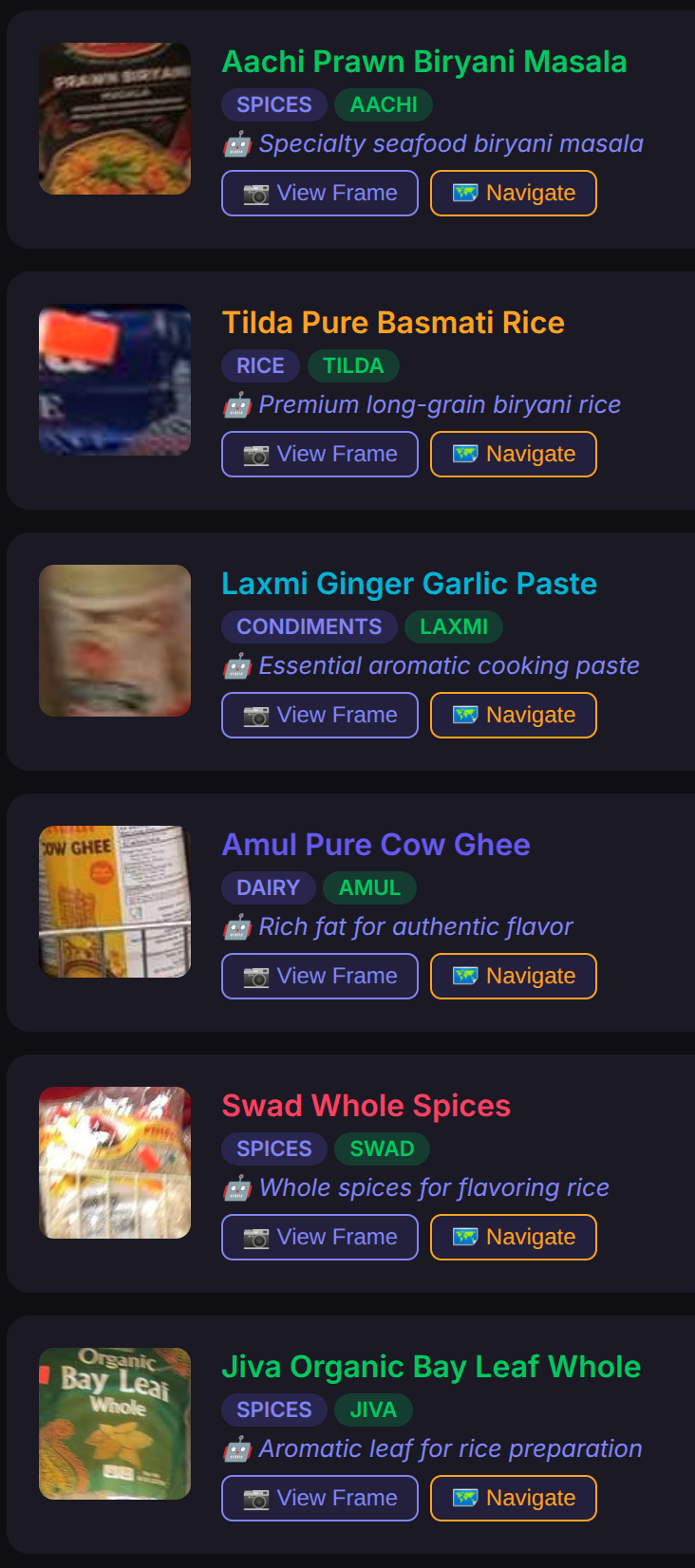

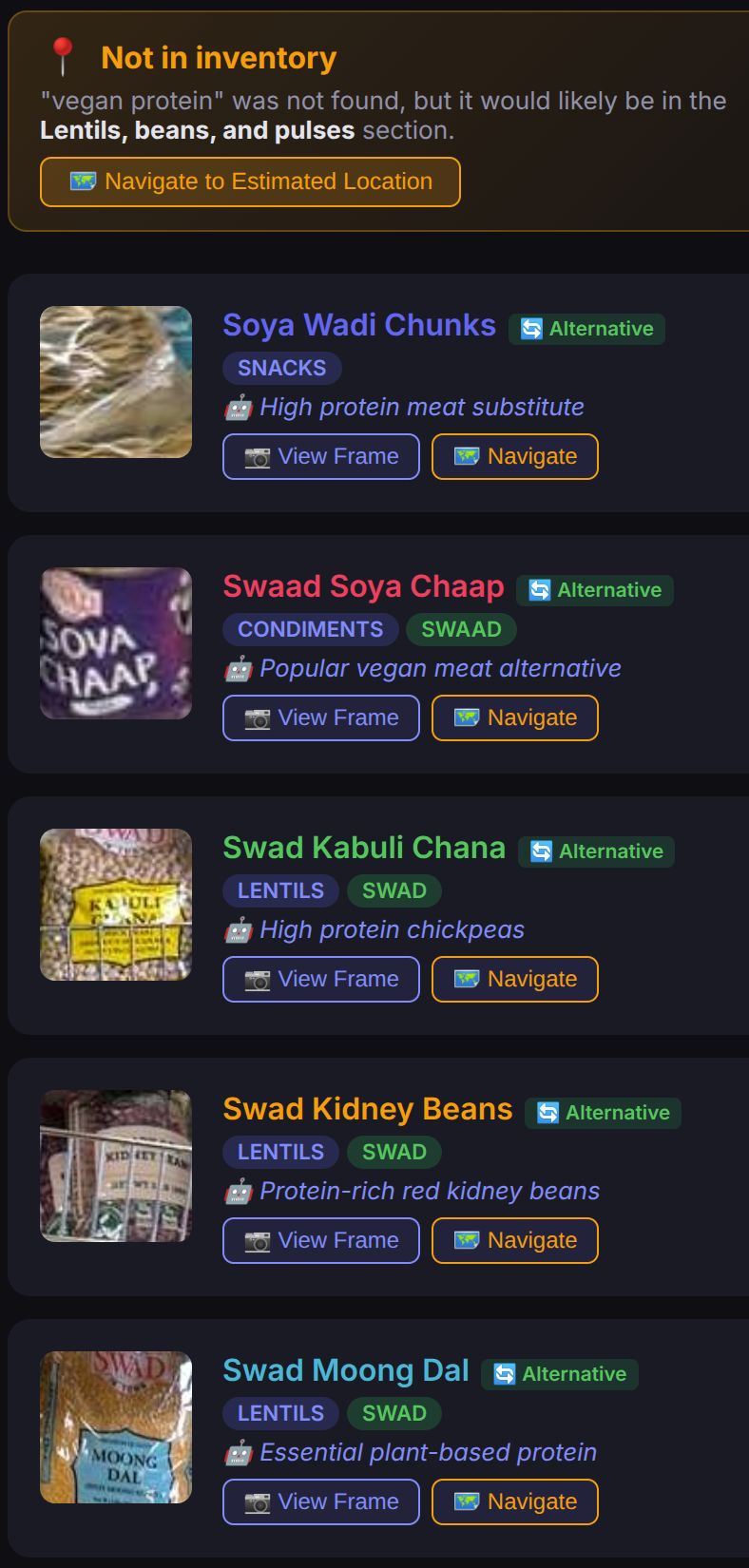

2. Intent-Aware Search & Category Estimation

The search engine identifies distinct physical items for multi-goal recipes (left) and degrades gracefully via category estimation when specific items are occluded (right).
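One way to picture the graceful degradation described above is a lookup that falls back from an exact item to a category-level estimate. The sketch below is hypothetical: the `Product` record, the exact-name matching, and the use of a category centroid as the fallback position are assumptions, since the page does not say how GIST performs retrieval or category estimation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Product:
    name: str
    category: str
    position: tuple  # (x, y) in metres, hypothetical map frame


def locate(query_name, query_category, products):
    """Return (position, source), where source records how the estimate was made."""
    exact = [p for p in products if p.name == query_name]
    if exact:
        return exact[0].position, "item"

    # Fallback (assumed): estimate from the centroid of same-category products.
    same_category = [p for p in products if p.category == query_category]
    if same_category:
        n = len(same_category)
        cx = sum(p.position[0] for p in same_category) / n
        cy = sum(p.position[1] for p in same_category) / n
        return (cx, cy), "category"

    return None, "unknown"


def plan_recipe(ingredients, products):
    """Resolve every (name, category) ingredient of a multi-goal request."""
    return {name: locate(name, category, products) for name, category in ingredients}


catalogue = [
    Product("spaghetti", "pasta", (2.0, 3.0)),
    Product("penne", "pasta", (2.0, 5.0)),
    Product("tomato sauce", "canned goods", (6.0, 1.0)),
]

print(plan_recipe([("tomato sauce", "canned goods"), ("fusilli", "pasta")], catalogue))
# {'tomato sauce': ((6.0, 1.0), 'item'), 'fusilli': ((2.0, 4.0), 'category')}
```

Returning the source alongside the position lets a downstream router tell the user whether they are being sent to the item itself or only to its likely section.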

3. One-Shot Semantic Localization

The zero-shot textual semantic localizer accurately estimates the user pose from a single smartphone image without geometric feature matching.

Visualization of semantic aliasing: the system identifies the correct physical products even when mirrored viewpoints make the exact viewing angle ambiguous.

| Metric | All 20 Frames | Correct Zone (80%) |
|---|---|---|
| Top-1 Error | 3.78 m | 2.71 m |
| Top-3 Error | 2.05 m | 1.35 m |
| Top-5 Error | 1.45 m | 1.04 m |
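The page does not define the Top-k Error metric. A common reading, assumed here, is the distance from the ground-truth position to the closest of the k highest-ranked candidate positions, averaged over frames. The sketch below follows that reading; the function names, the use of a mean, and the sample data are illustrative and are not the reported results.

```python
import math


def top_k_error(candidates, ground_truth, k):
    """Smallest distance to ground truth among the k highest-ranked candidates.

    candidates: list of (x, y) positions, best-ranked first.
    """
    return min(math.dist(c, ground_truth) for c in candidates[:k])


def mean_top_k_error(frames, k):
    """Average top-k error over (candidates, ground_truth) pairs."""
    errors = [top_k_error(candidates, gt, k) for candidates, gt in frames]
    return sum(errors) / len(errors)


# Two made-up frames, positions in metres.
frames = [
    ([(0, 0), (3, 4), (1, 0)], (1, 0)),
    ([(2, 0), (0, 0)], (0, 0)),
]
print(mean_top_k_error(frames, 1))  # 1.5
print(mean_top_k_error(frames, 2))  # 0.5
print(mean_top_k_error(frames, 3))  # 0.0
```

Under this definition the error can only shrink or stay the same as k grows, which matches the trend in the table.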

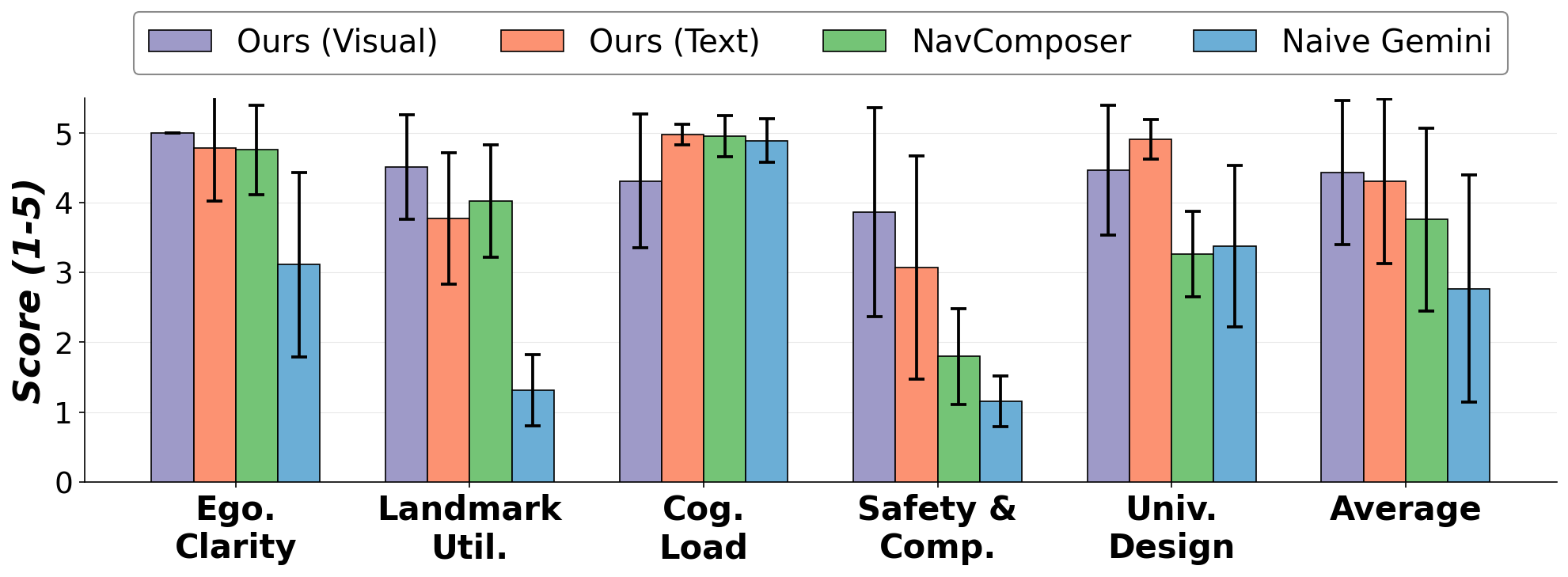

4. Visually-Grounded Spatial Routing

The generative routing engine produces robust, egocentric instructions that support universal accessibility and reduce cognitive load.
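To illustrate what "egocentric" means for a route, the sketch below derives turn directions relative to the walker's current heading from a sequence of waypoints. It is a rule-based stand-in, not the generative engine described above. The 30-degree tolerance and the axis convention (x to the right, y up, so a counter-clockwise heading change is a left turn) are assumptions.

```python
import math


def egocentric_turns(path, straight_tolerance_deg=30.0):
    """Label each interior waypoint with the turn a walker would make there.

    path: list of (x, y) waypoints in a frame with x right and y up.
    """
    turns = []
    for prev, here, nxt in zip(path, path[1:], path[2:]):
        heading_in = math.atan2(here[1] - prev[1], here[0] - prev[0])
        heading_out = math.atan2(nxt[1] - here[1], nxt[0] - here[0])
        # Signed heading change wrapped to [-180, 180); positive is counter-clockwise.
        delta = math.degrees(heading_out - heading_in)
        delta = (delta + 180.0) % 360.0 - 180.0
        if abs(delta) <= straight_tolerance_deg:
            turns.append("continue straight")
        elif delta > 0:
            turns.append("turn left")
        else:
            turns.append("turn right")
    return turns


# Walk north, then east, then south: two right turns.
print(egocentric_turns([(0, 0), (0, 5), (4, 5), (4, 0)]))
# ['turn right', 'turn right']
```

If the map uses image coordinates with y pointing down, the left and right labels would need to be swapped.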

GIST's explicit topological grounding significantly outperforms raw RGB sequence models, improving Safety, Completeness, and Egocentric Clarity. An in-situ ecological probe achieved an 80% navigation success rate using GIST verbal cues alone.