Shivendra Agrawal

University of Colorado

Boulder, Colorado

About myself: Ph.D. Candidate in Computer Science developing context-aware human-centered AI for real-world robotics. My research bridges Robotics, HRI, and Embodied AI to create context-aware systems that interpret semantic, social, and geometric cues. Technical expertise spans computer vision deployment, full-stack system architecture, leveraging modern techniques from probabilistic planning to Foundation Models (VLMs) for deployable embodied AI. Proven track record of publishing in top-tier venues (RA-L, HRI, AAMAS, IROS, ICRA). Award-winning educator and mentor, recognized for instructional excellence with multiple Outstanding TA and Instructor awards.

Other interests: I enjoy playing tennis a lot (like a lot!!). On good sunny days, I also like to bike and run in the lovely city of Boulder. (Bonus) Boulder Creek Path Fall view through my bike. I also like to explore local food and beverages.

Minor-flex - I have more than 60 millions views on my Google Map contributions and have received a rather vibrant pair of socks from Google. And no, that was all I ever got from them.

News 2026:

- (May 2026) Workshop Paper Accepted at ICRA 2026 SRRA!

- (Apr 2026) RA-L Paper Accepted!

- (Mar 2026) Won the Dissertation Completion Fellowship 2026

- (Feb 2026) Guest lecture on Hidden Markov Models in the Intro to AI class at CU Boulder

- (Feb 2026) Awarded Best Poster at the Annual Research Expo 2026 CU Boulder

- (Jan 2026) Invited research talk at Fairfield University

- (Jan 2026) Selected as the Next Gen Cohort for CERAWeek 2026 (Could not attend due to unavoidable circumstances)

Summary of some of my work:

Multimodal Knowledge Extraction and Spatial Grounding (In Submission): A multimodal pipeline that transforms mobile LiDAR scans into a lightweight semantic topology, enhancing spatial grounding for VLMs and enabling intent-aware search, one-shot global localization, and visually grounded language-based navigation. Project page

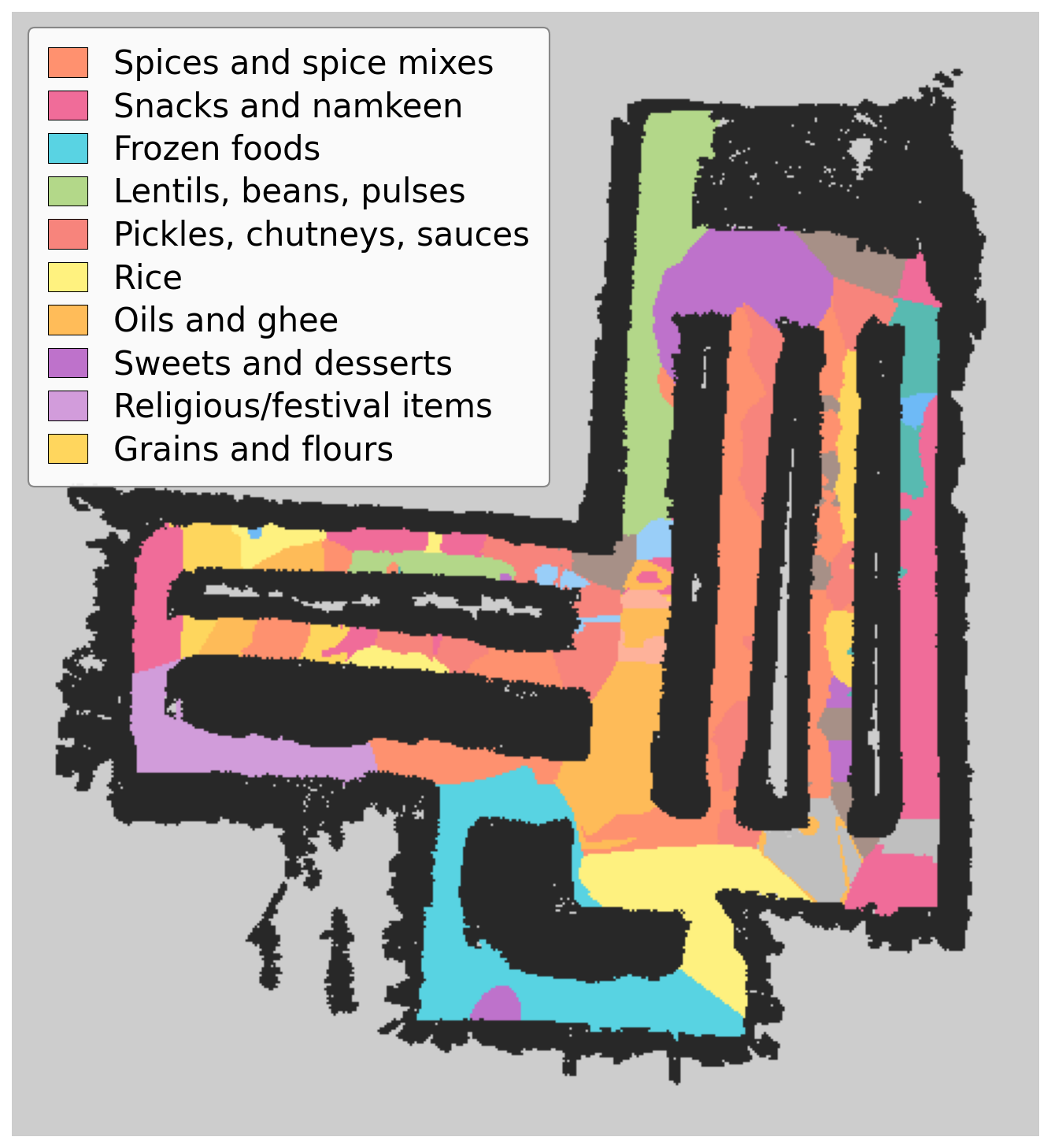

Robust Semantic Localization (RA-L): A Semantic Particle Filter leveraging RGB-D and Visual-Inertial Odometry (VIO) to achieve state-of-the-art global localization accuracy in quasi-static, cluttered environments without external infrastructure. Project page

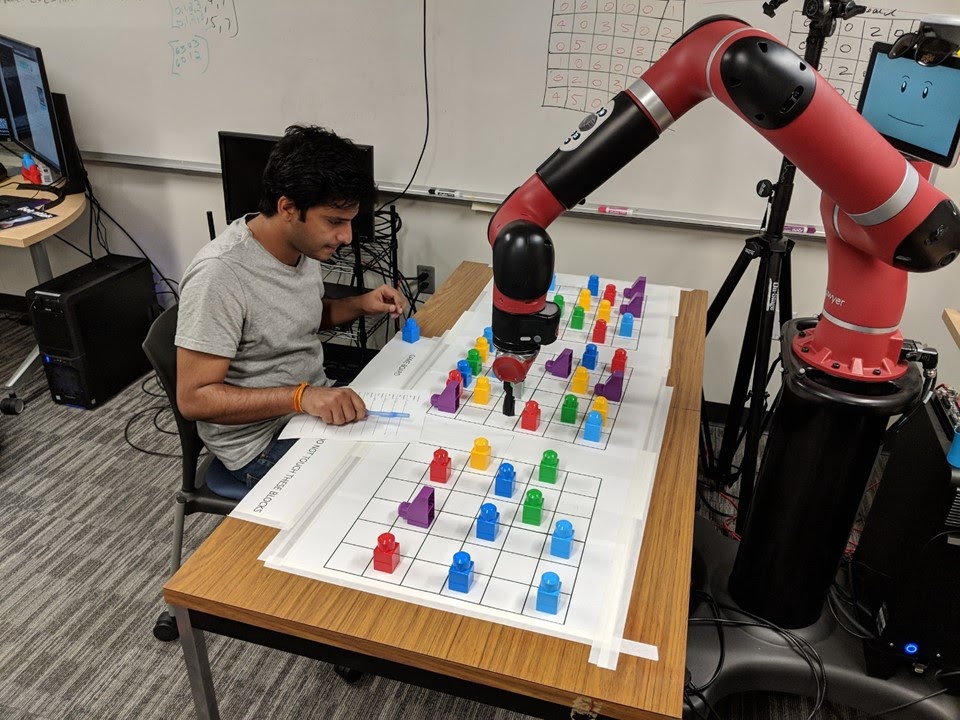

Learning Decision-Making Policies from Human Behavior (AAMAS): Learning an optimal guidance policy from human behavioral data to provide grasping guidance (featured in national media). Project page

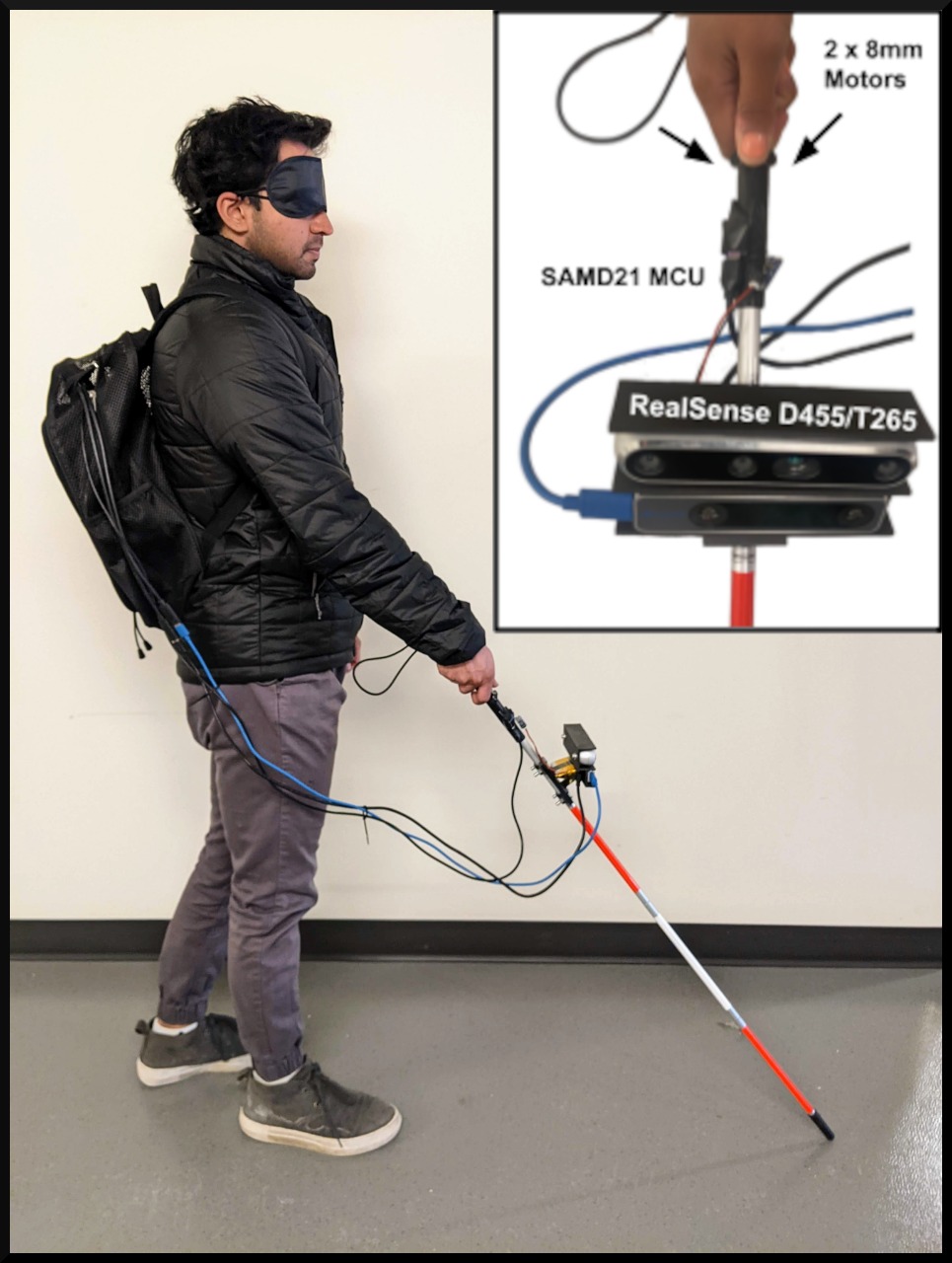

Designing Embodied AI for Social Context (IROS): A perceptive robotic cane that used models from psychology to identify socially appropriate seating locations (optimizing for privacy and intimacy). Project page

Explainable Robotic Coaching (HRI): A framework for a robot to infer a human partner's likely mental model by observing suboptimal actions to provide targeted coaching with justifications (HRI '19 Best Paper Runner-up). Project page